Integrating with Yandex Data Proc

You can use Apache Spark™ clusters deployed in Yandex Data Proc in your Yandex DataSphere projects. To set up integration with Data Proc in DataSphere:

- Prepare your infrastructure.

- Create a bucket.

- Create a Data Proc cluster.

- Set up the DataSphere project.

- Run your computations.

If you no longer need the resources you created, delete them.

Getting started

Before getting started, register in Yandex Cloud, set up a community, and link your billing account to it.

- On the DataSphere home page

- Select the organization for working in Yandex Cloud.

- Create a community.

- Link your billing account to the DataSphere community you are going to work in. Make sure that the billing account is linked and its status is ACTIVE or TRIAL_ACTIVE. If you do not have a billing account, create one in the DataSphere interface.

Required paid resources

The cost of maintaining a Data Proc cluster covers the cluster's computing resources and storage (see the Data Proc pricing).

Prepare the infrastructure

Log in to the Yandex Cloud management console

If you have an active billing account, you can create or select a folder for your infrastructure on the cloud page.

Note

If you use an identity federation to access Yandex Cloud, billing details might be unavailable to you. In this case, contact your Yandex Cloud organization administrator.

Create a folder and network

Create a folder where your Data Proc cluster will run.

- In the management console

- Give your folder a name, e.g., data-folder.

- Select the Create a default network option. This will create a network with subnets in each availability zone.

- Click Create.

Learn more about clouds and folders.

Create an egress NAT gateway

- In the data-folder folder, select Virtual Private Cloud.

- In the left-hand panel, select

- Click Create and set the gateway parameters:

- Enter the gateway name, e.g., nat-for-cluster.

- Gateway Type: Egress NAT.

- Click Save.

- In the left-hand panel, select

- Click Create and specify the route table parameters:

- Enter the name, e.g., route-table.

- Select the data-network network.

- Click Add a route.

- In the window that opens, select Gateway in the Next hop field.

- In the Gateway field, select the NAT gateway you created. The destination prefix will be propagated automatically.

- Click Add.

- Click Create a routing table.

Next, link the route table to a subnet to route traffic from it via the NAT gateway:

- In the left-hand panel, select

- In the line with the subnet you need, click

- In the menu that opens, select Link routing table.

- In the window that opens, select the created table from the list.

- Click Link.
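To make the routing step above concrete: a route applies to a packet when the packet's destination address falls inside the route's destination prefix. The sketch below assumes the automatically filled prefix for the NAT gateway route is the IPv4 default route 0.0.0.0/0. This is an assumption, so check the value shown in the console. The helper name route_matches is illustrative only.

```python
import ipaddress

# Assumption: the destination prefix filled in for the NAT gateway route
# is the IPv4 default route. Check the actual value in the console.
DEFAULT_PREFIX = ipaddress.ip_network("0.0.0.0/0")


def route_matches(destination: str, prefix=DEFAULT_PREFIX) -> bool:
    """Return True if traffic to `destination` falls inside the route prefix."""
    return ipaddress.ip_address(destination) in prefix


if __name__ == "__main__":
    for addr in ("8.8.8.8", "192.0.2.10"):
        print(addr, "->", "matches" if route_matches(addr) else "no match")
```

Under that assumption every IPv4 destination matches the route, which is why outbound traffic from the linked subnet can reach the internet through the gateway.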

Create a service account for the cluster

- Go to the data-folder folder.

- In the Service accounts tab, click Create service account.

- Enter the name of the service account, for example, sa-for-data-proc.

- Click Add role and assign the following roles to the service account:

  - dataproc.agent to create and use Data Proc clusters.

  - vpc.user to use the Data Proc cluster network.

  - iam.serviceAccounts.user to create resources in the folder on behalf of the service account.

- Click Create.

Create an SSH key pair

To ensure a safe connection to the Data Proc cluster hosts, you'll need SSH keys. If you generated SSH keys previously, you can skip this step.

- Open the terminal.

- Use the ssh-keygen command to create a new key:

      ssh-keygen -t ed25519

  After you run the command, you will be asked to specify the names of the files where the keys will be saved and to enter a password for the private key. Press Enter to use the default name and path suggested by the command.

  The key pair will be created in the directory you chose (by default, the .ssh directory in your home directory). The public key will be saved in a file with the .pub extension.

If you do not have OpenSSH

- Run cmd.exe or powershell.exe (make sure to update PowerShell first).

- Use the ssh-keygen command to create a new key. Run this command:

      ssh-keygen -t ed25519

  After you run the command, you will be asked to specify the names of the files where the keys will be saved and to enter a password for the private key. Press Enter to use the default name and path suggested by the command.

  The key pair will be created in the directory you chose (by default, the .ssh directory in your home directory). The public key will be saved in a file with the .pub extension.

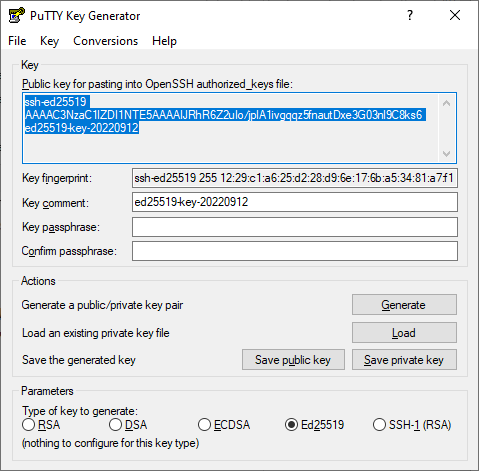

Create keys using the PuTTY app:

- Download and install PuTTY.

- Make sure that the directory where you installed PuTTY is included in PATH:

  - Right-click My computer. Click Properties.

  - In the window that opens, select Additional system parameters, then Environment variables (located in the lower part of the window).

  - Under System variables, find PATH and click Edit.

  - In the Variable value field, append the path to the directory where you installed PuTTY.

- Launch the PuTTYgen app.

- Select EdDSA as the pair type to generate. Click Generate and move the cursor in the field above it until key creation is complete.

- In Key passphrase, enter a strong password. Enter it again in the field below.

- Click Save private key and save the private key. Do not share its passphrase with anyone.

- Save the public key to a text file. To do this, copy the single-line public key from the text field to a text file named id_ed25519.pub.

Warning

Save the private key in a secure location, as you will not be able to connect to the cluster hosts without it.
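Before pasting the public key into the cluster form, it can help to confirm that the single-line key is a well-formed ed25519 key. The sketch below is a minimal check based on the OpenSSH public key line layout (key type, Base64 blob, optional comment). The function name is_ed25519_public_key is illustrative and not part of any Yandex Cloud tool.

```python
import base64
import struct


def is_ed25519_public_key(line: str) -> bool:
    """Check that a single-line OpenSSH public key is a well-formed ed25519 key."""
    parts = line.strip().split()
    if len(parts) < 2 or parts[0] != "ssh-ed25519":
        return False
    try:
        blob = base64.b64decode(parts[1], validate=True)
    except ValueError:  # binascii.Error is a subclass of ValueError
        return False
    if len(blob) < 4:
        return False
    # Blob layout: uint32 length + key type, then uint32 length + 32-byte key.
    (type_len,) = struct.unpack(">I", blob[:4])
    key_type = blob[4:4 + type_len]
    rest = blob[4 + type_len:]
    if key_type != b"ssh-ed25519" or len(rest) != 4 + 32:
        return False
    (key_len,) = struct.unpack(">I", rest[:4])
    return key_len == 32
```

To use it, read the contents of your .pub file into a string and pass it to the function. The check only validates the format; it does not prove that you hold the matching private key.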

Configure DataSphere

To work with Data Proc clusters in DataSphere, create and set up a project.

Create a project

- Open the DataSphere home page

- In the left-hand panel, select

- Select the community to create a project in.

- On the community page, click

- In the window that opens, enter a name and description (optional) for the project.

- Click Create.

Edit the project settings

- Go to the Settings tab.

- Under Advanced settings, click

- Specify the parameters:

  - Default folder: data-folder.

  - Service account: sa-for-data-proc.

  - Subnet: A subnet of the ru-central1-a availability zone in the data-folder folder.

    Note

    If you specify a subnet in the project settings, allocating computing resources may take longer.

  - Security groups, if you use them in your organization.

- Click Save.

Create a bucket

- In the management console

- In the list of services, select Object Storage.

- Click Create bucket.

- In the Name field, enter a name for the bucket.

- In the Object read access, Object listing access, and Read access to settings fields, select Restricted.

- Click Create bucket.

Create a Data Proc cluster

Before creating a cluster, make sure that your cloud has enough total SSD space (200 GB is allocated for a new cloud by default).

You can view your current resources under Quotas
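As a rough pre-check, you can add up the SSD storage your planned subclusters would request and compare it with the quota. The sketch below uses the 200 GB default mentioned above. The subcluster layout is purely hypothetical, and the calculation ignores any other resources in the cloud that also consume SSD quota.

```python
SSD_QUOTA_GB = 200  # default total SSD space for a new cloud, per the text above

# Hypothetical subcluster layout: (name, number of hosts, SSD size per host in GB).
subclusters = [
    ("master", 1, 40),
    ("data", 1, 128),
]


def total_ssd_gb(layout):
    """Sum the SSD storage requested across all hosts of all subclusters."""
    return sum(hosts * disk_gb for _name, hosts, disk_gb in layout)


required = total_ssd_gb(subclusters)
print(f"Requested {required} GB of {SSD_QUOTA_GB} GB")
if required > SSD_QUOTA_GB:
    print("Not enough SSD quota: reduce disk sizes or request a quota increase.")
```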

- In the management console

- Click Create resource and select Data Proc cluster from the drop-down list.

- Enter a name for the cluster in the Cluster name field. It must be unique within the folder.

- In the Version field, select 2.0.

- In the Services field, select LIVY, SPARK, YARN, and HDFS.

- Enter the public part of your SSH key in the SSH key field.

- In the Service account field, select sa-for-data-proc.

- In the Availability zone field, select ru-central1-a.

- If required, set the properties of Hadoop and its components in the Properties field, such as:

      hdfs:dfs.replication : 2
      hdfs:dfs.blocksize : 1073741824
      spark:spark.driver.cores : 1

  The available properties are listed in the official documentation for the components.

- Select the created bucket in the Bucket name field.

- Select a network for the cluster.

- Enable the UI Proxy option to access the web interfaces of Data Proc components.

- Configure subclusters: no more than one main subcluster with a Master host and subclusters for data storage or computing.

  Note

  To run computations on clusters, make sure you have at least one Compute or Data subcluster.

  The roles of Compute and Data subclusters are different: you can deploy data storage components on Data subclusters, and data processing components on Compute subclusters. Storage on a Compute subcluster is only used to temporarily store processed files.

- For each subcluster, you can configure:

- Number of hosts.

- Host class: Platform and computing resources available to the host.

- Storage size and type.

- Subnet of the network where the cluster is located.

- For Compute subclusters, you can specify the autoscaling parameters.

- When you have set up all the subclusters, click Create cluster.

Data Proc runs the create cluster operation. After the cluster status changes to Running, you can connect to any active subcluster using the specified SSH key.

The Data Proc cluster you created will be added to your DataSphere project under Project resources ⟶ Data Proc ⟶ Available clusters.
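In the Properties example from the cluster creation steps, the prefix before the first colon names the component (hdfs, spark) and the rest is that component's own property key. The sketch below groups such lines by component so you can review them before entering them in the form. The parse_properties helper is illustrative and not part of Data Proc, and the line layout is taken from the example above only.

```python
def parse_properties(text: str) -> dict:
    """Group 'component:key : value' lines by component prefix."""
    grouped = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        # Split the value off at the last " : " and the component at the first ":".
        name, _, value = line.rpartition(" : ")
        component, _, key = name.partition(":")
        grouped.setdefault(component, {})[key] = value
    return grouped


example = """
hdfs:dfs.replication : 2
hdfs:dfs.blocksize : 1073741824
spark:spark.driver.cores : 1
"""

for component, props in parse_properties(example).items():
    print(component, props)
```

Note that the dfs.blocksize value in the example, 1073741824, is 1024 ** 3 bytes, i.e., 1 GiB.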

Run your computations on the cluster

- Open the DataSphere project:

  - Select the relevant project in your community or on the DataSphere homepage.

  - Click Open project in JupyterLab and wait for the loading to complete.

  - Open the notebook tab.

- In the cell, insert the code to compute. For example:

      #!spark --cluster <cluster_name>
      import random

      # Return True if a random point of the unit square lies inside the quarter circle.
      def inside(p):
          x, y = random.random(), random.random()
          return x*x + y*y < 1

      NUM_SAMPLES = 1_000_000

      count = sc.parallelize(range(0, NUM_SAMPLES)) \
          .filter(inside).count()
      print("Pi is roughly %f" % (4.0 * count / NUM_SAMPLES))

  Where #!spark --cluster <cluster_name> is a mandatory system command to run computations on a cluster.

  Wait for the computation to start. While it is in progress, you'll see logs under the cell.

- Write data to S3 by specifying the bucket name:

      #!spark
      data = [[1, "tiger"], [2, "lion"], [3, "snow leopard"]]
      # Build the DataFrame from the data list defined above.
      df = spark.createDataFrame(data, schema="id LONG, name STRING")
      df.repartition(1).write.option("header", True).csv("s3://<bucket_name>/")

- Run the cells. To do this, select Run ⟶ Run Selected Cells or press Shift + Enter.

The file will appear in the bucket. To view bucket contents in the JupyterLab interface, create and activate an S3 connector in your project.
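To get an idea of what the written file contains, the sketch below builds roughly the same CSV locally with the Python standard library, without Spark. It only approximates the content: Spark writes the result as a generated part file inside the target path, so expect a generated file name rather than a fixed one.

```python
import csv
import io

data = [[1, "tiger"], [2, "lion"], [3, "snow leopard"]]

buffer = io.StringIO()
writer = csv.writer(buffer, lineterminator="\n")
writer.writerow(["id", "name"])  # header row, as with option("header", True)
writer.writerows(data)

csv_text = buffer.getvalue()
print(csv_text, end="")
```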

Note

To get more than 100 MB of the Data Proc cluster data, use an S3 connector.

To learn more about running computations in the Data Proc clusters in DataSphere, see Computing sessions.
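The Pi example in the first cell uses a Monte Carlo method: the share of random points of the unit square that land inside the quarter circle approaches Pi / 4. The sketch below reproduces the same logic locally in plain Python, without a cluster, which can be handy for checking the code before sending it to Spark. The estimate_pi function and its seed parameter are illustrative additions.

```python
import random


def estimate_pi(num_samples: int, seed: int = 0) -> float:
    """Estimate Pi by sampling random points in the unit square."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(num_samples):
        x, y = rng.random(), rng.random()
        if x * x + y * y < 1:  # the point falls inside the quarter circle
            inside += 1
    # Quarter-circle area divided by square area equals Pi / 4.
    return 4.0 * inside / num_samples


print("Pi is roughly %f" % estimate_pi(100_000))
```

On the cluster, sc.parallelize spreads the same sampling across executors, and filter(inside).count() plays the role of the inside counter here.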

Delete the resources you created

Warning

As a user of a cluster deployed in Data Proc, you manage its lifecycle yourself. The cluster will run, and you will be charged for it until you shut it down.

To stop paying for the resources you created:

- Delete the objects from the bucket.

- Delete the bucket.

- Delete the cluster.

- Delete your project.