Creating an MLFlow server for logging experiments and artifacts

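This tutorial describes how to deploy an MLFlow Tracking Server on a VM, with a managed PostgreSQL database as the backend store and an Object Storage bucket for artifacts.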

To create an MLFlow server for logging JupyterLab Notebook experiments and artifacts:

- Prepare your infrastructure.

- Create a static access key.

- Create an SSH key pair.

- Create a VM.

- Create a managed DB.

- Create a bucket.

- Install the MLFlow tracking server and add it to the VM autoload.

- Create secrets.

- Train the model.

If you no longer need the resources you created, delete them.

Getting started

Before getting started, register in Yandex Cloud, set up a community, and link your billing account to it.

- On the DataSphere home page

- Select the organization for working in Yandex Cloud.

- Create a community.

- Link your billing account to the DataSphere community you are going to work in. Make sure that the billing account is linked and its status is ACTIVE or TRIAL_ACTIVE. If you do not have a billing account, create one in the DataSphere interface.

Required paid resources

The cost of training a model based on data from Object Storage includes:

- Fee for DataSphere computing resource usage.

- Fee for Compute Cloud computing resource usage.

- Fee for a running Managed Service for PostgreSQL cluster.

- Fee for storing data in a bucket (see Object Storage pricing).

- Fee for data operations (see Object Storage pricing).

Prepare the infrastructure

Log in to the Yandex Cloud management console

If you have an active billing account, you can create or select a folder to deploy your infrastructure in, on the cloud page

Note

If you use an identity federation to access Yandex Cloud, billing details might be unavailable to you. In this case, contact your Yandex Cloud organization administrator.

Create a folder

- In the management console

- Give your folder a name, e.g., data-folder.

- Click Create.

Create a service account for Object Storage

To access a bucket in Object Storage, you need a service account with the storage.viewer and storage.uploader roles.

- In the management console, go to data-folder.

- In the Service accounts tab, click Create service account.

- Enter a name for the service account, e.g., datasphere-sa.

- Click Add role and assign the service account the storage.viewer and storage.uploader roles.

- Click Create.

Create a static access key

To access Object Storage from DataSphere, you need a static key.

- In the management console

- At the top of the screen, go to the Service accounts tab.

- Select the datasphere-sa service account.

- In the top panel, click

- Select Create static access key.

- Specify the key description and click Create.

- Save the ID and private key. After you close the dialog, the private key value will become unavailable.

- Create an access key for the datasphere-sa service account:

      yc iam access-key create --service-account-name datasphere-sa

  Result:

      access_key:
        id: aje6t3vsbj8l********
        service_account_id: ajepg0mjt06s********
        created_at: "2022-07-18T14:37:51Z"
        key_id: 0n8X6WY6S24N7Oj*****
      secret: JyTRFdqw8t1kh2-OJNz4JX5ZTz9Dj1rI9hx*****

- Save the ID (key_id) and secret key (secret). You will not be able to get the key value again.
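If you script this step, the two values can be pulled out of the CLI output programmatically. The sketch below assumes you ran the same command with the global `--format json` flag and that the JSON mirrors the YAML layout shown above; the function name and the sample values are hypothetical placeholders, not real credentials.

```python
import json


def extract_static_key(cli_json: str) -> tuple[str, str]:
    """Return (key_id, secret) from the JSON printed by the CLI.

    Assumes the JSON has the same shape as the YAML result above:
    an "access_key" object holding "key_id", and a top-level "secret".
    """
    payload = json.loads(cli_json)
    return payload["access_key"]["key_id"], payload["secret"]


# Placeholder output for illustration only.
sample = (
    '{"access_key": {"id": "aje-example", "key_id": "EXAMPLE_KEY_ID"},'
    ' "secret": "EXAMPLE_SECRET"}'
)
key_id, secret = extract_static_key(sample)
print(key_id)
```

Store both values somewhere safe right away, since the secret cannot be retrieved later.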

Create an SSH key pair

To connect to a VM over SSH, you need a key pair: the public key resides on the VM, while the private one is kept by the user. This method is more secure than connecting with login and password.

Note

SSH connections using a login and password are disabled by default on public Linux images that are provided by Yandex Cloud.

To create a key pair:

- Open the terminal.

- Use the ssh-keygen command to create a new key:

      ssh-keygen -t ed25519

  After you run the command, you will be asked to specify the names of the files where the keys will be saved and to enter a password for the private key. Press Enter to use the default name and path suggested by the command.

  With the defaults, the key pair is saved in the .ssh directory inside your home directory. The public key is saved in a .pub file.

If you do not have OpenSSH

- Run cmd.exe or powershell.exe (make sure to update PowerShell first).

- Use the ssh-keygen command to create a new key. Run this command:

      ssh-keygen -t ed25519

  After you run the command, you will be asked to specify the names of the files where the keys will be saved and to enter a password for the private key. Press Enter to use the default name and path suggested by the command.

  With the defaults, the key pair is saved in the .ssh directory inside your home directory. The public key is saved in a .pub file.

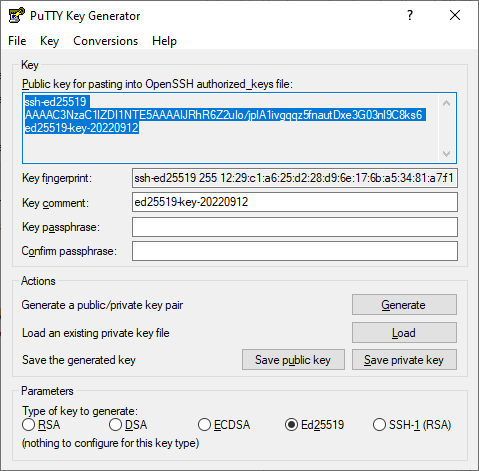

Create keys using the PuTTY app:

- Download and install PuTTY.

- Make sure that the directory where you installed PuTTY is included in PATH:

  - Right-click My computer. Click Properties.

  - In the window that opens, select Additional system parameters, then Environment variables (located in the lower part of the window).

  - Under System variables, find PATH and click Edit.

  - In the Variable value field, append the path to the directory where you installed PuTTY.

- Launch the PuTTYgen app.

- Select EdDSA as the pair type to generate. Click Generate and move the cursor in the field above it until key creation is complete.

- In Key passphrase, enter a strong password. Enter it again in the field below.

- Click Save private key and save the private key. Do not share its passphrase with anyone.

- Save the public key to a text file. To do this, copy the single-line public key from the text field to a text file named id_ed25519.pub.
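Before pasting the public key into the VM creation form, it can help to confirm that the line you copied really is a single-line OpenSSH Ed25519 public key. The following is a minimal sketch of such a check; the function names are hypothetical, and the dummy key is built from zero bytes purely for illustration.

```python
import base64
import binascii
import struct

KEY_TYPE = b"ssh-ed25519"
# Wire format: length-prefixed key type, then a length-prefixed 32-byte key.
BLOB_PREFIX = struct.pack(">I", len(KEY_TYPE)) + KEY_TYPE + struct.pack(">I", 32)


def looks_like_ed25519_public_key(line: str) -> bool:
    """Check the 'ssh-ed25519 <base64> [comment]' layout of a public key line."""
    parts = line.strip().split()
    if len(parts) < 2 or parts[0] != KEY_TYPE.decode():
        return False
    try:
        blob = base64.b64decode(parts[1], validate=True)
    except (binascii.Error, ValueError):
        return False
    return blob.startswith(BLOB_PREFIX) and len(blob) == len(BLOB_PREFIX) + 32


def make_dummy_public_key(comment: str = "user@example") -> str:
    """Build a structurally valid (but useless) key line for testing."""
    blob = BLOB_PREFIX + bytes(32)
    return f"ssh-ed25519 {base64.b64encode(blob).decode()} {comment}"


print(looks_like_ed25519_public_key(make_dummy_public_key()))
```

The check only validates the structure of the line; it does not prove that the key matches your private key.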

Create a VM

- In the management console

- In the list of services, select Compute Cloud.

- Click Create virtual machine.

- Under Basic parameters:

  - Enter a name for the VM, e.g., mlflow-vm.

  - Select the ru-central1-a availability zone.

- Under Image/boot disk selection, select Ubuntu 22.04.

- Under Disks and file storages, select the Disks tab and configure the boot disk:

  - Type: SSD

  - Size: 20 GB

- Under Computing resources:

  - vCPU: 2

  - RAM: 4 GB

- Under Network settings, select the subnet that is specified in the DataSphere project settings. Make sure to set up a NAT gateway for the subnet.

- Under Access:

  - Service account: datasphere-sa

  - Enter the username in the Login field.

  - In the SSH key field, paste the contents of the public key file.

- Click Create VM.

Create a managed DB

- In the management console

- Select Managed Service for PostgreSQL.

- Click Create cluster.

- Enter a name for the cluster, e.g., mlflow-bd.

- Under Host class, select the s3-c2-m8 configuration.

- Under Size of storage, select 250 GB.

- Under Database, enter your username and password. You will need them to establish a connection.

- Under Hosts, select the ru-central1-a availability zone.

- Click Create cluster.

- Go to the DB you created and click Connect.

- Save the host address from the host field: you will need it to establish a connection.

Create a bucket

- In the management console

- In the list of services, select Object Storage.

- At the top right, click Create bucket.

- In the Name field, enter a name for the bucket, e.g., mlflow-bucket.

- In the Object read access, Object listing access, and Read access to settings fields, select Restricted.

- Click Create bucket.

- To create a folder for MLflow artifacts, open the bucket you created and click Create folder.

- Enter a name for the folder, e.g., artifacts.

Install the MLFlow tracking server and add it to the VM autoload

- Connect to the VM via SSH.

- Download the Anaconda distribution:

      curl -O https://repo.anaconda.com/archive/Anaconda3-2023.07-1-Linux-x86_64.sh

- Run its installation:

      bash Anaconda3-2023.07-1-Linux-x86_64.sh

  Wait for the installation to complete and restart the shell.

- Create an environment:

      conda create -n mlflow

- Activate the environment:

      conda activate mlflow

- Install the required packages by running the following commands one by one:

      conda install -c conda-forge mlflow
      conda install -c anaconda boto3
      pip install psycopg2-binary
      pip install pandas

- Create environment variables for S3 access:

  - Open the file with the variables:

        sudo nano /etc/environment

  - Add the following lines to the file, substituting your VM's internal IP address:

        MLFLOW_S3_ENDPOINT_URL=https://storage.yandexcloud.net/
        MLFLOW_TRACKING_URI=http://<VM_internal_IP>:8000

- Specify the data to be used by the boto3 library for S3 access:

  - Create a directory named .aws:

        mkdir ~/.aws

  - Create a credentials file:

        nano ~/.aws/credentials

  - Add the following lines to the file, substituting the static key ID and value:

        [default]
        aws_access_key_id=<static_key_ID>
        aws_secret_access_key=<secret_key>

- Run the MLFlow Tracking Server, substituting your cluster data:

      mlflow server --backend-store-uri postgresql://<username>:<password>@<host>:6432/db1?sslmode=verify-full --default-artifact-root s3://mlflow-bucket/artifacts -h 0.0.0.0 -p 8000

  You can check your connection to MLFlow at http://<VM_public_IP>:8000.
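One detail that is easy to miss when filling in the backend store URI: if the database password contains characters such as `@`, `:` or `/`, they generally need to be percent-encoded, or the URI will be parsed incorrectly. The sketch below shows one way to assemble the URI safely. The function name, the sample user, password, and host are hypothetical; the port, database name, and sslmode defaults are taken from the command above.

```python
from urllib.parse import quote


def build_backend_store_uri(
    username: str,
    password: str,
    host: str,
    port: int = 6432,
    database: str = "db1",
    sslmode: str = "verify-full",
) -> str:
    """Assemble a PostgreSQL URI, percent-encoding the credentials."""
    user = quote(username, safe="")
    pwd = quote(password, safe="")
    return f"postgresql://{user}:{pwd}@{host}:{port}/{database}?sslmode={sslmode}"


# Hypothetical values for illustration only.
uri = build_backend_store_uri("mlflow_user", "p@ss:w/rd", "db-host.example.internal")
print(uri)
```

The resulting string can be passed as the value of `--backend-store-uri`; quoting it in the shell avoids surprises with the `?` character.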

Enable MLFlow autorun

For MLFlow to run automatically after the VM restarts, set it up as a systemd service.

- Create directories for storing logs and error details:

      mkdir ~/mlflow_logs/
      mkdir ~/mlflow_errors/

- Create a file named mlflow-tracking.service:

      sudo nano /etc/systemd/system/mlflow-tracking.service

- Add the following lines to the file, replacing the placeholders with your data:

      [Unit]
      Description=MLflow Tracking Server
      After=network.target

      [Service]
      Environment=MLFLOW_S3_ENDPOINT_URL=https://storage.yandexcloud.net/
      Restart=on-failure
      RestartSec=30
      StandardOutput=file:/home/<VM_username>/mlflow_logs/stdout.log
      StandardError=file:/home/<VM_username>/mlflow_errors/stderr.log
      User=<VM_username>
      ExecStart=/bin/bash -c 'PATH=/home/<VM_username>/anaconda3/envs/mlflow/bin/:$PATH exec mlflow server --backend-store-uri postgresql://<DB_username>:<password>@<host>:6432/db1?sslmode=verify-full --default-artifact-root s3://mlflow-bucket/artifacts -h 0.0.0.0 -p 8000'

      [Install]
      WantedBy=multi-user.target

  Where:

  - <VM_username>: VM account username.

  - <DB_username>: Username specified when creating a database cluster.

  The envs/mlflow path segment matches the name of the conda environment created earlier (mlflow).

- Run the service and enable autoload at system startup:

      sudo systemctl daemon-reload
      sudo systemctl enable mlflow-tracking
      sudo systemctl start mlflow-tracking
      sudo systemctl status mlflow-tracking
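After the service starts, you may want a scripted check that the server actually answers, for example after a VM reboot. The sketch below assumes the tracking server exposes a `/health` endpoint that returns HTTP 200 when it is up; treat that endpoint and both function names as assumptions to verify against your MLflow version.

```python
import urllib.error
import urllib.request


def health_url(tracking_uri: str) -> str:
    """Build the health-check URL from the tracking URI."""
    return tracking_uri.rstrip("/") + "/health"


def is_healthy(tracking_uri: str, timeout: float = 5.0) -> bool:
    """Return True if the server answers the health check with HTTP 200."""
    try:
        with urllib.request.urlopen(health_url(tracking_uri), timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, DNS failure, or an HTTP error status.
        return False


print(health_url("http://10.0.0.5:8000/"))
```

For example, `is_healthy("http://<VM_internal_IP>:8000")` could be run from a machine in the same subnet once the placeholder is replaced with the real address.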

Create secrets

-

Select the relevant project in your community or on the DataSphere homepage

- Under Project resources, click

- Click Create.

- In the Name field, enter the secret name: MLFLOW_S3_ENDPOINT_URL.

- In the Value field, paste this URL: https://storage.yandexcloud.net/.

- Click Create.

- Create three more secrets:

  - MLFLOW_TRACKING_URI set to http://<VM_internal_IP>:8000.

  - AWS_ACCESS_KEY_ID with the static key ID.

  - AWS_SECRET_ACCESS_KEY with the static key value.
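A quick way to catch a missing or misspelled secret before training is to check for all four names from a notebook cell. This sketch assumes the project secrets are exposed to the notebook as environment variables; the helper name is hypothetical, and the dictionary passed in the example stands in for a real environment.

```python
import os

REQUIRED_SETTINGS = (
    "MLFLOW_S3_ENDPOINT_URL",
    "MLFLOW_TRACKING_URI",
    "AWS_ACCESS_KEY_ID",
    "AWS_SECRET_ACCESS_KEY",
)


def missing_settings(env=None):
    """Return the names of required settings that are absent or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_SETTINGS if not env.get(name)]


# Example with a stand-in environment where two secrets are missing.
example_env = {
    "MLFLOW_S3_ENDPOINT_URL": "https://storage.yandexcloud.net/",
    "MLFLOW_TRACKING_URI": "http://10.0.0.5:8000",
}
print(missing_settings(example_env))
```

Calling `missing_settings()` with no arguments checks the real environment of the running notebook.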

Train the model

The example uses a dataset to forecast the quality of wine based on quantitative characteristics, such as acidity, pH, and residual sugar. To train the model, copy and paste the code into notebook cells.

- Open the DataSphere project:

  - Select the relevant project in your community or on the DataSphere homepage

  - Click Open project in JupyterLab and wait for the loading to complete.

  - Open the notebook tab.

- Install the required modules:

      %pip install mlflow

- Import the required libraries:

      import os
      import warnings
      import sys

      import pandas as pd
      import numpy as np
      from sklearn.metrics import mean_squared_error, mean_absolute_error, r2_score
      from sklearn.model_selection import train_test_split
      from sklearn.linear_model import ElasticNet
      from urllib.parse import urlparse
      import mlflow
      import mlflow.sklearn
      from mlflow.models import infer_signature
      import logging

- Create an experiment in MLFlow:

      mlflow.set_experiment("my_first_experiment")

- Create a function to assess the forecast quality:

      def eval_metrics(actual, pred):
          rmse = np.sqrt(mean_squared_error(actual, pred))
          mae = mean_absolute_error(actual, pred)
          r2 = r2_score(actual, pred)
          return rmse, mae, r2

- Prepare data, train the model, and register it with MLflow:

      logging.basicConfig(level=logging.WARN)
      logger = logging.getLogger(__name__)

      warnings.filterwarnings("ignore")
      np.random.seed(40)

      # Load the wine quality dataset
      csv_url = (
          "https://raw.githubusercontent.com/mlflow/mlflow/master/tests/datasets/winequality-red.csv"
      )
      try:
          data = pd.read_csv(csv_url, sep=";")
      except Exception as e:
          logger.exception(
              "Unable to download training & test CSV, check your internet connection. Error: %s", e
          )
          # Stop here: the steps below cannot run without the dataset
          raise

      # Split the dataset into training and test samples
      train, test = train_test_split(data)

      # Separate the target variable from the variables used for forecasting
      train_x = train.drop(["quality"], axis=1)
      test_x = test.drop(["quality"], axis=1)
      train_y = train[["quality"]]
      test_y = test[["quality"]]

      alpha = 0.5
      l1_ratio = 0.5

      # Start a run in MLflow
      with mlflow.start_run():

          # Create an ElasticNet model and train it
          lr = ElasticNet(alpha=alpha, l1_ratio=l1_ratio, random_state=42)
          lr.fit(train_x, train_y)

          # Predict the quality based on the test sample
          predicted_qualities = lr.predict(test_x)

          (rmse, mae, r2) = eval_metrics(test_y, predicted_qualities)

          print("Elasticnet model (alpha={:f}, l1_ratio={:f}):".format(alpha, l1_ratio))
          print("  RMSE: %s" % rmse)
          print("  MAE: %s" % mae)
          print("  R2: %s" % r2)

          # Log information about hyperparameters and quality metrics in MLflow
          mlflow.log_param("alpha", alpha)
          mlflow.log_param("l1_ratio", l1_ratio)
          mlflow.log_metric("rmse", rmse)
          mlflow.log_metric("r2", r2)
          mlflow.log_metric("mae", mae)

          predictions = lr.predict(train_x)
          signature = infer_signature(train_x, predictions)

          tracking_url_type_store = urlparse(mlflow.get_tracking_uri()).scheme

          # Register the model with MLflow
          if tracking_url_type_store != "file":
              mlflow.sklearn.log_model(
                  lr, "model", registered_model_name="ElasticnetWineModel", signature=signature
              )
          else:
              mlflow.sklearn.log_model(lr, "model", signature=signature)

You can check the result at http://<VM_public_IP>:8000.
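To make the three logged metrics easier to interpret in the MLflow UI, here is a dependency-free sketch that computes RMSE, MAE, and R2 by hand on four made-up values. The function name and the numbers are illustrative only; the tutorial itself uses the scikit-learn implementations inside `eval_metrics`.

```python
import math


def eval_metrics_plain(actual, pred):
    """Compute RMSE, MAE and R2 with plain Python arithmetic."""
    n = len(actual)
    errors = [a - p for a, p in zip(actual, pred)]
    ss_res = sum(e * e for e in errors)            # residual sum of squares
    rmse = math.sqrt(ss_res / n)                   # root mean squared error
    mae = sum(abs(e) for e in errors) / n          # mean absolute error
    mean_actual = sum(actual) / n
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)  # total sum of squares
    r2 = 1 - ss_res / ss_tot                       # coefficient of determination
    return rmse, mae, r2


# Made-up values: two predictions are off by one, two are exact.
rmse, mae, r2 = eval_metrics_plain([3, 5, 7, 9], [2, 5, 8, 9])
print(rmse, mae, r2)
```

Lower RMSE and MAE mean smaller prediction errors, while R2 closer to 1 means the model explains more of the variance in the target.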

How to delete the resources you created

To stop paying for the resources you created:

- Delete the VM.

- Delete the database cluster.

- Delete the objects from the bucket.

- Delete the bucket.

- Delete the project.